New laws make play safer for minors

When the original Tomb Raider launched in 1996, it caused a stir, not just for its gameplay but for how its lead character was oversexualized. Regulators responded by limiting sales to teens and adults, and age checks were simple: if you didn’t have ID, you weren’t getting a copy. Fast-forward two decades. Digital storefronts had replaced counter clerks, and age gates had become easy to skip. If you wanted to play Rise of the Tomb Raider in 2016, you mostly needed a payment card and a click.

Meanwhile, online play exploded. Today, roughly 618 million players are under 18. That’s about a quarter of all kids worldwide. These games serve as playgrounds for creativity and connection, but they also expose children to risks like inappropriate content, unmoderated chat, or impulsive purchases.

Those risks show up in the data. Pew’s 2024 survey of U.S. teens found that 43% of teens who play video games have been bullied or harassed while playing. Online sexual interactions have also been reported on gaming platforms such as Roblox, Minecraft, and Fortnite. Algorithms can make things worse when they profile young players, surface content that isn’t right for them, or nudge them towards purchases.

Regulators are responding. New rules in the UK, Europe, and the US raise the bar on age checks to curb unmonitored chat, addictive mechanics, and adult-themed content, with many requirements taking effect from 2025 onward. But stronger age checks come with trade-offs. Some systems ask players to share sensitive information, such as IDs or credit card numbers, which helps with compliance but is risky if not handled with care. And because requirements vary by region, global publishers now have to balance safety, privacy, and practicality.

This guide breaks down what’s changing, the risks to keep in mind, and what publishers can do next to protect young players while keeping their games open, fair, and fun for everyone.

Which laws apply to you?

Where do your players play, and what content do they interact with? Think about chat tools, social lobbies, user-built worlds, personalised skins, adult-themed scenes, in-game paid items, and content recommendation systems. Different countries regulate these features in different ways. Let’s take a look at some of the recent changes in the UK, EU, and the United States.

The UK

The Online Safety Act (OSA) tightened regulations for publishers with players in the UK. Its child-safety duties, set out in Ofcom’s Protection of Children Codes, came into effect in July 2025. They require publishers to protect children from harmful content or face fines of up to 18 million GBP or 10% of qualifying global annual revenue, whichever is greater.

Who does it apply to?

If your game is available in the UK and is likely to be accessed by children, this law applies to you, even if the game is rated 18+. Games are specifically called out when they have social features like chat, matchmaking, or user-generated content. Small publishers are not exempt: they need age checks too.

What checks are needed?

The OSA expects “highly effective” age assurance. Acceptable methods include photo ID checks, facial age estimation, mobile-network operator checks, credit card verification, or email-based age estimation. Simple “I’m over 18” tick boxes are not enough.

What else applies?

Publishers must assess their risks and create safer settings for minors. Examples include safer content recommendation algorithms that do not present children with harmful or adult content. The OSA also calls for strong moderation and easy tools to block, mute, and report other players and content.

Europe

The Digital Services Act (DSA) turns voluntary self-regulation into a mandatory legal standard across the EU. In July 2025, the European Commission published guidelines on how platforms should protect minors with meaningful privacy, security, and safety standards, including reliable age checks.

Who does it apply to?

The DSA applies to online platforms accessible to minors, with the exception of micro and small enterprises. If your game is playable in the EU and likely to attract children, this law applies to you. And you can’t opt out by claiming it’s age-restricted. Platforms with more than 45 million monthly EU users must meet the strictest obligations, and serious violations can lead to fines of up to 6% of global annual turnover.

What checks are needed?

While the DSA doesn’t prescribe specific age checks, it does ask for ‘reliable’ methods that respect privacy. In practice, that means verifying age while collecting as little extra personal data as possible. So while a simple “I’m over 18” tick box isn’t enough, ID checks that store sensitive data aren’t ideal either.

What else applies?

The DSA asks online platforms to provide better moderation tools and protective settings for minors.[5] For game publishers, this could mean private-by-default profiles, restricted chat features, clear labeling of in-game paid items, and limits on dark patterns that push players toward purchases such as loot boxes or time-limited offers. Showing minors ads based on profiling is banned outright, which threatens publishers whose business models depend on advertising.

The United States

There’s a patchwork of laws across the US. The main federal law is COPPA, the Children’s Online Privacy Protection Act, and in 2025 the Federal Trade Commission amended its COPPA Rule with stricter requirements. The changes strengthen parental consent requirements for children under 13 and set clearer limits on how a company may collect or use a child’s personal data. Publishers must comply with the amendments by April 2026.

But it doesn’t stop there. States such as Texas, Utah, Louisiana, and California have passed laws that make mobile app stores responsible for verifying users’ ages and passing age signals to app developers. Game publishers must now prepare to receive those signals and respond to them.
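Under these laws, a game may receive an age signal instead of asking for age itself. Below is a minimal sketch of how a game might respond, assuming a hypothetical `AgeSignal` shape (real store APIs and state laws define their own formats) and three hypothetical experience modes. It decides on the lowest possible age, so a range that straddles 13 is handled conservatively:

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AgeSignal:
    """Hypothetical age-range signal received from an app store.

    Real store APIs define their own formats; this shape is an assumption.
    """
    min_age: int
    max_age: Optional[int]  # None for an open-ended range such as "18+"


def experience_for(signal: Optional[AgeSignal]) -> str:
    """Pick a hypothetical experience mode from the lowest age the signal allows."""
    if signal is None:
        return "own_age_check"  # no signal received: fall back to the game's own flow
    if signal.min_age < 13:
        return "child_mode"     # may be under 13: get parental consent before collecting data
    if signal.min_age >= 18:
        return "adult"
    return "teen_mode"


print(experience_for(AgeSignal(13, 15)))  # teen_mode
print(experience_for(AgeSignal(10, 14)))  # child_mode: the range includes under-13s
```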

Who does it apply to?

If your game is aimed at children under 13 (or you know they’re playing it) and you collect personal information, COPPA applies. Many free-to-play and multiplayer games fall within scope because they gather identifiers, game profiles, or chat logs. Violations can be costly: in 2022, Epic Games agreed to pay a $275 million penalty over alleged COPPA violations in Fortnite.

What checks are needed?

Before collecting data from a child, publishers must confirm that the adult providing consent is a parent or guardian. Methods can include credit card checks, knowledge-based checks, or verified email consent.

What else applies?

Parents must be able to review or delete their child’s data and revoke consent. Companies must not share a child’s information with third parties without consent. Recent enforcement actions show that regulators expect voice and text chat to be off by default for younger players, stronger purchase controls for parents, and an end to dark patterns that deter players from cancelling or requesting refunds.

How are publishers implementing them?

Game developers are experimenting with a range of tools to meet new regulations worldwide. While a mix of solutions is being used, they all aim to make the process simple and safe for players.

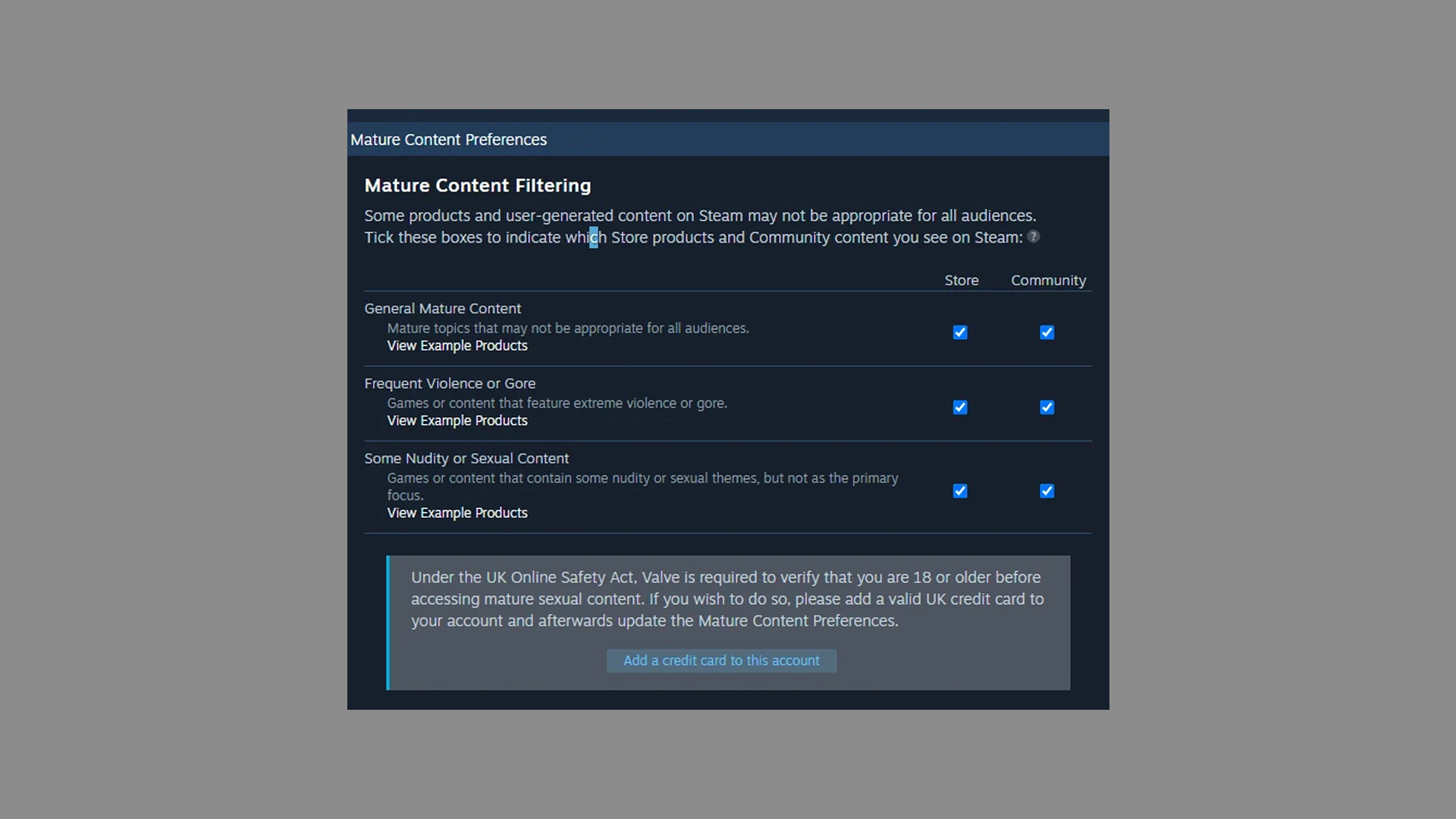

Credit card checks

To confirm that a player is 18 or older, some platforms ask for credit card details. Regulators in countries like the UK consider this “highly effective” because card issuers only give credit cards to adults and verify age when issuing them.

Who’s doing it?

Valve: Steam says it now requires UK users to have a credit card on file to unlock mature content. No card means no access, and there’s currently no alternative age verification method for UK Steam users. Because the platform already stores payment information, it avoids handing IDs or selfies to outside vendors, which reduces certain privacy risks.

The verdict?

It mostly works, but credit card checks raise data protection concerns: a breach could expose sensitive financial information. They also exclude adults without credit cards, including some low-income players. And teens can sometimes work around the system by using a shared family account or a virtual private network (VPN).

Facial age estimation (with no data retention)

You take a selfie, AI estimates your age, and the image is deleted. Computer vision and machine learning estimate a user’s age from facial features, without requiring IDs or credit cards. Once a player’s age range is known, a platform can adjust features accordingly, for example by limiting chat to similar age bands.

Who’s doing it?

Roblox: Roblox claims it is the first big platform to make facial age checks mandatory to access chat features. Users are assigned an age bracket (9-12, 13-15, 16-17, 18-20, or 21+) and can only chat with people in the same or an adjacent bracket. “This innovation supports age-based chat and limits communication between minors and adults”, says Roblox.
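A same-or-adjacent bracket rule reduces to comparing positions in an ordered list. The sketch below is a hypothetical illustration of that rule using the brackets listed above, not Roblox’s implementation; `AGE_BRACKETS` and `can_chat` are made-up names:

```python
# Hypothetical sketch of an age-banded chat rule: two players may chat only if
# their brackets are the same or adjacent. Not Roblox's actual implementation.
AGE_BRACKETS = ["9-12", "13-15", "16-17", "18-20", "21+"]  # ordered youngest to oldest


def bracket_index(bracket: str) -> int:
    """Position of a bracket in the ordered list; raises ValueError if unknown."""
    return AGE_BRACKETS.index(bracket)


def can_chat(bracket_a: str, bracket_b: str) -> bool:
    """True when the brackets are identical or next to each other."""
    return abs(bracket_index(bracket_a) - bracket_index(bracket_b)) <= 1


print(can_chat("13-15", "16-17"))  # True: adjacent brackets
print(can_chat("13-15", "18-20"))  # False: two brackets apart
```

Because the rule depends only on bracket order, changing the bands means editing the list rather than the chat logic.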

Xbox: Xbox says it’s rolling out age verification for UK users via the Yoti service, which can verify age from a selfie or official documents. In 2026, unverified accounts will lose full access to some social features, such as voice and text communication.

The verdict?

Age estimation is fast, frictionless, and more inclusive than ID or credit card checks. But a determined teenager with TikTok filters can fool it, and AI can misjudge ages, especially near key thresholds like 18. Some players also worry about what happens to their data in a breach, such as the 2025 incident at a third-party vendor used by Discord, which exposed 70k selfies and government IDs.
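One common way to handle misjudgements near a threshold, assumed here rather than attributed to any platform in this article, is a safety buffer similar to retail “Challenge 25” policies: accept an estimate without further checks only when it is well above the legal age, and otherwise offer a second method such as an ID check. In this sketch, `check_estimate` and the seven-year buffer are hypothetical:

```python
from enum import Enum


class Outcome(Enum):
    PASS = "pass"          # estimate comfortably clears the age threshold
    FALLBACK = "fallback"  # too close to call: offer another method, such as an ID check


def check_estimate(estimated_age: float, legal_age: int = 18, buffer_years: int = 7) -> Outcome:
    """Hypothetical decision rule for a facial age estimate.

    buffer_years is an assumed safety margin, not a regulatory figure: with the
    defaults, only estimates of 25 or older pass without a second check.
    """
    if estimated_age >= legal_age + buffer_years:
        return Outcome.PASS
    return Outcome.FALLBACK


print(check_estimate(31.5))  # Outcome.PASS
print(check_estimate(19.0))  # Outcome.FALLBACK
```

The trade-off is friction: a wider buffer sends more genuine adults to the fallback check but lets fewer minors through on a misjudged estimate.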

Zero-knowledge proof of age

Zero-knowledge proof of age, or ZKP, lets users confirm they are over a certain age without revealing who they are. A trusted issuer provides an age credential that lives in a digital wallet. When a player needs to prove they are over 18, the wallet returns only that result, not a name or full birth date.

Who’s doing it?

No major gaming platform uses ZKP yet. Standards are still forming, and the EU is piloting prototypes. The European Commission has described it as a future ‘gold standard’ and plans to integrate it with its Digital Identity Wallet in 2026.[12]

The verdict?

ZKP checks promise stronger privacy and meet many goals set by UK and EU laws, but the ecosystem is still developing. Shared accounts remain a weak point: if a parent’s credential sits in a child’s account, the child may appear to be an adult. ZKP is a strong confirmation tool, but not a complete safety solution on its own.

Restricted accounts

Restricted accounts limit certain features until a user verifies their age or receives parental approval. Chat, in-game spending, downloads, and marketing often remain locked until consent flows are completed.
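A minimal sketch of restricted-by-default logic, assuming a hypothetical set of lockable features: nothing is locked for a verified adult, core gameplay is never locked, and everything else stays off until a parent unlocks it feature by feature. `Account`, `grant`, and `can_use` are illustrative names, not any publisher’s API:

```python
from dataclasses import dataclass, field

# Hypothetical list of features that stay locked on a restricted account.
LOCKABLE_FEATURES = {"chat", "purchases", "downloads", "marketing"}


@dataclass
class Account:
    verified_adult: bool = False                              # passed an age check
    parent_approved: set[str] = field(default_factory=set)   # features a parent unlocked

    def grant(self, feature: str) -> None:
        """Record a parent's consent for a single feature."""
        if feature not in LOCKABLE_FEATURES:
            raise ValueError(f"not a lockable feature: {feature}")
        self.parent_approved.add(feature)

    def revoke(self, feature: str) -> None:
        """Withdraw a parent's consent; unknown features are ignored."""
        self.parent_approved.discard(feature)

    def can_use(self, feature: str) -> bool:
        if feature not in LOCKABLE_FEATURES:
            return True  # core gameplay is never locked
        if self.verified_adult:
            return True  # age check passed: nothing is restricted
        return feature in self.parent_approved  # otherwise locked by default


kid = Account()
print(kid.can_use("play"), kid.can_use("chat"))  # True False
kid.grant("chat")
print(kid.can_use("chat"), kid.can_use("purchases"))  # True False
```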

Who’s doing it?

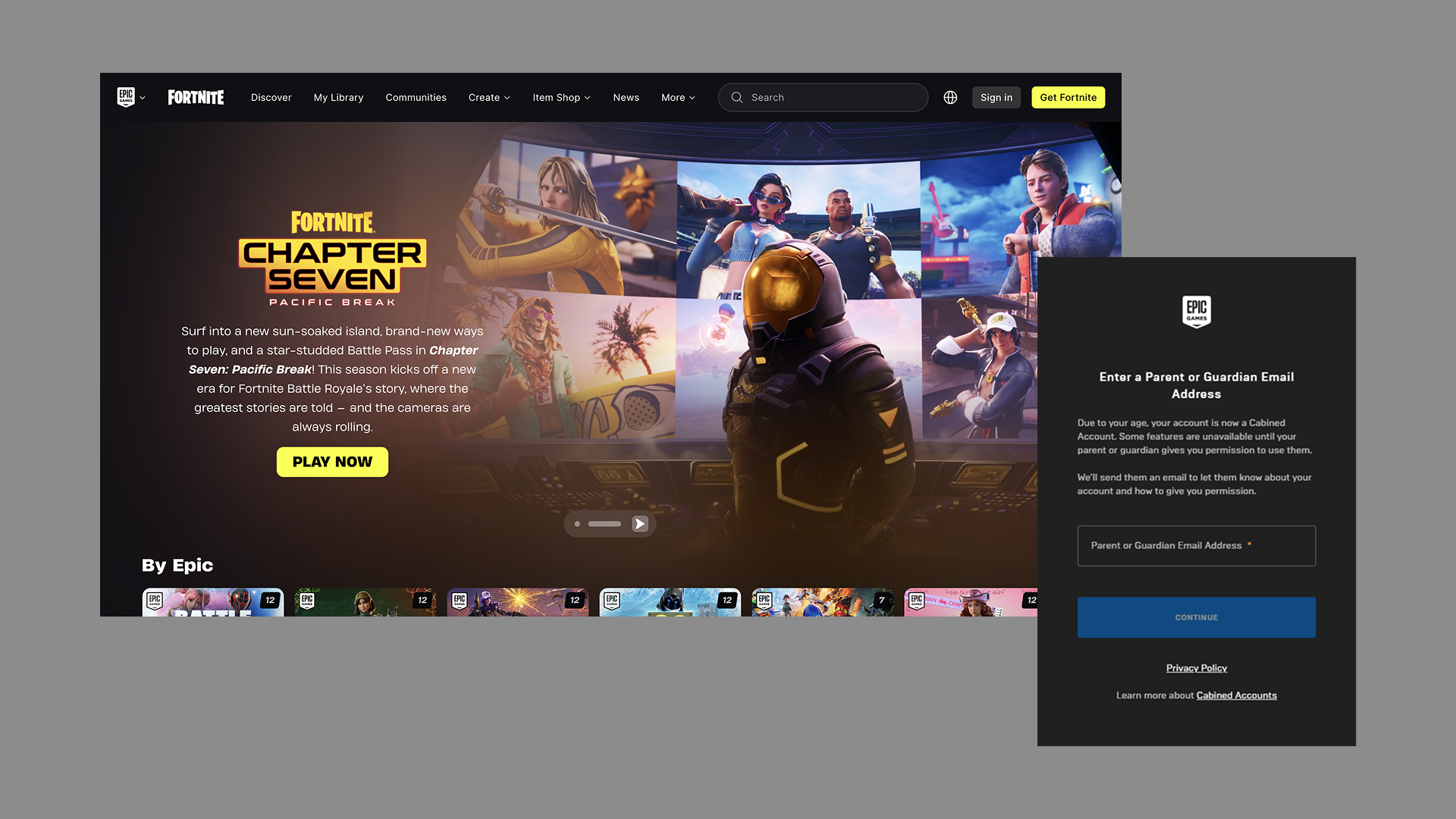

Epic Games: Epic Games announced “Cabined Accounts” for Fortnite, Rocket League, and Fall Guys in 2022. Once a person is deemed underage, their account is restricted until parental consent is given. While cabined, players can still access and play games, but social features and purchases are limited. If parents give consent, they can choose which features to unlock.

Others: While Epic is leading the way, platforms such as PlayStation, Nintendo, and Xbox offer similar controls that limit social and spending features unless a parent gives consent.

The verdict?

Restricted-by-default accounts could become a common way to meet new rules. They build safety into the design rather than adding checks afterwards. Players can enjoy the game while limits stay in place, and parents stay in control.

Community moderation

Online multiplayer games can expose children to hate speech, grooming, and adult content, especially in open chat or user-created spaces. Community moderation often blends self-reporting, automated systems, and human review to remove harmful content and block dangerous users.

Who’s doing it?

Riot Games: League of Legends publisher Riot Games says it uses an honor system to incentivize good behavior with perks like BXP bonuses, skins, and special content. In turn, it punishes bad behaviour by reducing rewards and limiting communication privileges, or banning the player outright. Players can block and report immediately, while machine-learning and text-evaluation models, as well as human moderators, analyse behaviour and enforce the rules.

Supercell: Supercell has an in-chat ‘tap to report’ feature across all of its games, with some games allowing a user to report a player directly by tapping their profile. It uses a mix of moderation features, saying “while much of our systems are automated, we also employ trained moderators who focus on leaderboards, tournament participants, and community-sourced reports”.

The verdict?

Moderation is essential but challenging. Human teams provide careful oversight but cannot scale to every match or message. Reporting tools and reputation systems help communities regulate themselves, while automated systems offer speed and scale. A strong identity system, built on publisher-owned player accounts and data infrastructure, can improve player experiences by tracking a player’s reputation and history across the games and touchpoints in a publisher’s universe.
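To show why a shared player ID helps, here is a hypothetical sketch of a publisher-wide ledger that stores upheld reports from every game against one player ID, so a pattern spread across several titles still triggers a restriction. The class, the threshold of three, and the restriction rule are assumptions for illustration:

```python
from collections import defaultdict


class ReputationLedger:
    """Hypothetical publisher-wide record of upheld moderation reports, keyed by player ID."""

    def __init__(self, restrict_after: int = 3) -> None:
        self.restrict_after = restrict_after  # assumed threshold, not an industry standard
        self.upheld = defaultdict(list)       # player_id -> [(game, reason), ...]

    def record(self, player_id: str, game: str, reason: str) -> None:
        """Store a report that automated or human review has upheld."""
        self.upheld[player_id].append((game, reason))

    def history(self, player_id: str) -> list:
        """All upheld reports for a player, across every game (without creating an entry)."""
        return list(self.upheld.get(player_id, []))

    def chat_restricted(self, player_id: str) -> bool:
        # Reports from any of the publisher's games count toward the threshold.
        return len(self.history(player_id)) >= self.restrict_after


ledger = ReputationLedger()
ledger.record("player-42", "game-a", "harassment")
ledger.record("player-42", "game-a", "spam")
ledger.record("player-42", "game-b", "harassment")
print(ledger.chat_restricted("player-42"))  # True: three upheld reports across two games
print(ledger.chat_restricted("player-7"))   # False: no history
```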

How to build games for everyone, everywhere

If you publish games globally, you face a patchwork of rules. Some countries require strict ID checks, others accept lighter age estimation, and some ban core commercial features, such as loot boxes or targeted ads, for specific age groups. It’s a lot to keep track of.

The UK, Europe, and the United States are already enforcing new age verification rules or will start soon. No single tool is mandated everywhere, but regulators expect ‘effective’ age verification. That leaves publishers to decide which checks to use, and almost all of them involve sharing sensitive information. New ‘gold standards’ like ZKP checks promise stronger privacy protections, but the ecosystem is still developing.

At Reaktor, we help publishers meet global legislation and protect children without limiting their freedom. It starts with investing in robust data infrastructure and owned ecosystems, such as well-structured player ID and account systems. Once you’ve got that, you can begin building complex features like family accounts, age-banded experiences, and cross-game moderation systems.

By implementing rules in a central hub, publishers can avoid duplicating logic across multiple games. And by moving that logic out of the game code and into web services, they can ship updates faster and reduce the risk of non-compliance. Emerging SaaS tools make this easier: they can track global legislation and automatically toggle features on and off for each region. These features include in-game chat, spending limits, targeted ads, adult content, loot boxes, and dark patterns.
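A minimal sketch of the central-hub idea, assuming a hypothetical policy table served by a web service: each game asks the service which features to enable instead of hard-coding the rules, so editing the table changes behaviour in every game without a new client build. The region codes and flag values are placeholders, not a reading of any specific law:

```python
# Hypothetical central policy table. The values are placeholders for illustration,
# not a statement of what any particular law requires.
DEFAULT_FEATURES = {"chat": True, "targeted_ads": True, "loot_boxes": True, "spending": True}

# (region, is_minor) -> overrides applied on top of the defaults
REGION_RULES = {
    ("EU", True): {"targeted_ads": False, "chat": False},
    ("UK", True): {"chat": False},
    ("US", True): {"chat": False, "spending": False},
}


def features_for(region: str, is_minor: bool) -> dict[str, bool]:
    """Resolve the feature flags a game client should apply for one player."""
    flags = dict(DEFAULT_FEATURES)  # copy so the defaults are never mutated
    flags.update(REGION_RULES.get((region, is_minor), {}))
    return flags


print(features_for("EU", True))
# {'chat': False, 'targeted_ads': False, 'loot_boxes': True, 'spending': True}
```

Keeping the overrides as data rather than code is the design choice that matters here: a legal change becomes a table edit reviewed once, rather than a patch to every game.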

In an era when age verification laws can change faster than an app store approval process, speed is key. At the same time, hasty implementation can backfire. A solid data foundation makes everything easier, from consent flows to regional compliance. It’s one of the best long-term investments a publisher can make to protect young players and comply with tomorrow’s laws.
