When I originally started working on the html2canvas project, I was trying to create a 3D representation of the webpage using WebGL. While I did end up getting a very elementary version created, it occurred to me that the real value of the project (if there was any) was in fact with a 2D representation of the webpage, or as some would call it, a “screenshot”. That’s where the project originally got started and the 3D rendering target eventually died off.

If you are familiar with browser render trees and how they are formed, you will find quite a lot of similarities in html2canvas. The library creates the “screenshot” of the page by evaluating the DOM. It iterates through every node on the page and evaluates its computed styles, such as position, dimensions, borders, border-radius, colors, backgrounds, z-index, floats, overflows, opacity etc. Using this information, it forms a render queue, which simply consists of calls to the canvas drawing context, such as “draw shape with n-coordinates and fill it with x-color”. The canvas doesn’t understand CSS, so each CSS property that applies a style you want to render needs to be implemented separately, and as such the project’s scope is really never-ending.
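To make the render queue idea concrete, here is a minimal sketch. It is not the library's actual code: the node shape (`style`, `bounds`, `children`) and the function names are made up for illustration, and it only handles background colors painted in document order, ignoring z-index and everything else.

```javascript
// Hypothetical node shape: { style, bounds, children }. In a browser these
// values would come from getComputedStyle() and getBoundingClientRect().
function buildRenderQueue(node, queue = []) {
  const { style, bounds } = node;
  if (style.backgroundColor && style.backgroundColor !== "transparent") {
    // One queue item = one deferred call to the canvas drawing context.
    queue.push({
      op: "fillRect",
      color: style.backgroundColor,
      args: [bounds.x, bounds.y, bounds.width, bounds.height],
    });
  }
  for (const child of node.children || []) {
    buildRenderQueue(child, queue);
  }
  return queue;
}

// Replays the queue onto anything that looks like a 2D canvas context.
function applyRenderQueue(queue, ctx) {
  for (const item of queue) {
    ctx.fillStyle = item.color;
    ctx[item.op](...item.args);
  }
}

// Example input: a white page containing one colored box and one transparent box.
const page = {
  style: { backgroundColor: "#ffffff" },
  bounds: { x: 0, y: 0, width: 800, height: 600 },
  children: [
    { style: { backgroundColor: "#336699" }, bounds: { x: 10, y: 10, width: 100, height: 40 }, children: [] },
    { style: { backgroundColor: "transparent" }, bounds: { x: 10, y: 60, width: 100, height: 40 }, children: [] },
  ],
};
const queue = buildRenderQueue(page);
```

In a browser, `applyRenderQueue(queue, canvas.getContext("2d"))` would paint the two visible rectangles; the transparent node produces no queue item.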

The fact that the text rendering methods available with canvas are slightly limited makes things just a bit more complicated. You can’t, for example, set letter-spacing when rendering text, so to apply the effect you need to calculate the position of each letter on the page manually and render the letters separately. Since calculating the position of text isn’t trivial to begin with, and requires different approaches depending on the browser, this has performance implications.
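As a rough illustration of the per-letter approach, the sketch below computes an x-position for each character. The function name and the `measureWidth` callback are assumptions for this example; in a browser the callback could be `(s) => ctx.measureText(s).width`. Measuring letters one at a time ignores kerning, so this is an approximation rather than how the library positions text.

```javascript
// Returns the x-position of every character when a fixed letter-spacing
// is added after each one. measureWidth(ch) must return a width in pixels.
function layoutLetters(text, startX, letterSpacing, measureWidth) {
  const positions = [];
  let x = startX;
  for (const ch of text) {
    positions.push({ ch, x });
    x += measureWidth(ch) + letterSpacing;
  }
  return positions;
}

// In a browser, each letter would then be drawn separately:
//   for (const { ch, x } of positions) ctx.fillText(ch, x, baselineY);
```

Every letter becomes its own draw call, which is where the performance cost comes from.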

Another problem area lies with rendering images. You can render most images to a canvas without a problem, but if the images aren’t from the same origin as the page, they will end up tainting the canvas. In other words, the canvas won’t be readable anymore (getImageData/toDataURL). This isn’t even limited to cross-origin images: in Chrome, for example, SVG images taint the canvas as well. To work around this problem, the library can attempt to sniff whether the image taints the canvas and ignore it if it does, or use a proxy to load the image so it can be safely drawn. The library can also attempt to load the images with CORS enabled, but unfortunately images are rarely served with the required headers.
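The first step in deciding whether an image needs special handling is an origin comparison. The helper below is a hypothetical sketch (the name `needsProxy` is not from the library) using the standard `URL` class. It only covers the cross-origin case and does not detect the SVG tainting mentioned above.

```javascript
// Returns true when drawing imageUrl onto a canvas on pageUrl would be a
// cross-origin draw, i.e. the image would need CORS headers or a proxy.
function needsProxy(imageUrl, pageUrl) {
  const image = new URL(imageUrl, pageUrl); // resolves relative URLs too
  if (image.protocol === "data:") {
    return false; // data: URLs are embedded in the page itself
  }
  return image.origin !== new URL(pageUrl).origin;
}

// In a browser, a CORS-enabled load is requested by setting
//   img.crossOrigin = "anonymous";
// before assigning img.src. It only helps if the server sends the headers.
```

Note that the origin includes scheme, host and port, so `https://example.com` and `https://example.com:8443` count as different origins.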

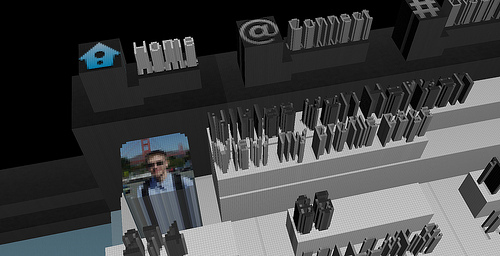

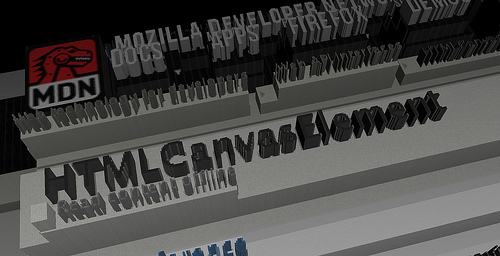

With the render queue formed, it’s just a matter of applying the queue onto a canvas element and you’ll get your 2D “screenshot” of the webpage. However, the purpose of this blog post was to illustrate how to create a 3D representation of the webpage. It is in fact very easy to form the 3D representation by using the screenshot as a diffuse map and creating a heightmap from the same render queue we formed earlier. Instead of using the calculated colors, you swap each of them for a color based on the DOM tree depth of the element that formed the render item.
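The color swap could look something like the sketch below. It assumes each render item carries a `depth` field holding the DOM tree depth of its element, and it maps depth linearly to a gray level; both the field name and the linear mapping are assumptions for illustration.

```javascript
// Maps a tree depth to a gray color: depth 0 is black, maxDepth is white.
function depthToColor(depth, maxDepth) {
  const level = maxDepth === 0 ? 0 : Math.round((depth / maxDepth) * 255);
  return `rgb(${level}, ${level}, ${level})`;
}

// Returns a copy of the queue in which every item's color encodes its depth.
// Rendering this copy to a second canvas yields the heightmap.
function toHeightmapQueue(queue) {
  const maxDepth = queue.reduce((max, item) => Math.max(max, item.depth), 0);
  return queue.map((item) => ({
    ...item,
    color: depthToColor(item.depth, maxDepth),
  }));
}
```

Because the shapes and coordinates stay the same, the heightmap lines up pixel for pixel with the screenshot.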

Using the heightmap, we can then form the appropriate vertices and triangles based on where the depth changes. I decided to pre-calculate the vertex positions instead of doing it within the vertex shader, to avoid forming more vertices than necessary. While forming the vertices, each vertex is assigned a color based on the pixel color from the diffuse map. For the vertices forming the vertical faces (i.e. depth differences), I applied a shadow depending on the direction of the face, to give a slight feel of lighting.
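A naive way to find the faces is sketched below. It walks a small grid of height levels, emits one top face per cell, and adds a vertical wall wherever a neighbor is lower. The function name, the face format and the shade values are all invented for this example, and unlike the approach described above it makes no attempt to merge cells to reduce the vertex count.

```javascript
// heights[y][x] is the height level of one heightmap cell.
// Each returned face is a quad, i.e. 4 vertices and 2 triangles.
function buildFaces(heights) {
  const rows = heights.length;
  const cols = heights[0].length;
  // Cells outside the map are treated as ground level (0).
  const at = (x, y) =>
    y >= 0 && y < rows && x >= 0 && x < cols ? heights[y][x] : 0;
  // One arbitrary shade per wall direction to fake directional lighting.
  const sides = [
    { dx: 1, dy: 0, shade: 0.8 },
    { dx: -1, dy: 0, shade: 0.6 },
    { dx: 0, dy: 1, shade: 0.7 },
    { dx: 0, dy: -1, shade: 0.9 },
  ];
  const faces = [];
  for (let y = 0; y < rows; y++) {
    for (let x = 0; x < cols; x++) {
      const h = heights[y][x];
      faces.push({ kind: "top", x, y, from: h, to: h, shade: 1 });
      for (const { dx, dy, shade } of sides) {
        const neighbor = at(x + dx, y + dy);
        if (neighbor < h) {
          // The wall spans from the lower neighbor up to this cell's height.
          faces.push({ kind: "wall", x, y, dx, dy, from: neighbor, to: h, shade });
        }
      }
    }
  }
  return faces;
}
```

The `shade` value would be multiplied into the vertex color taken from the diffuse map before the quad is split into triangles.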

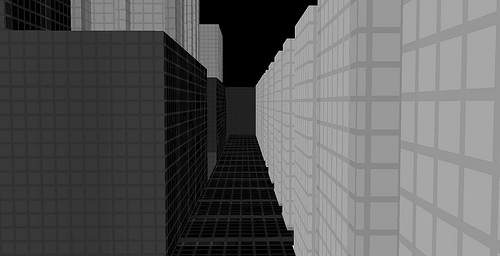

To give a better sense of distance and to add artificial detail to the page, I wrote the fragment shader to draw squares on the faces, giving the illusion of them being constructed of small cubes (1 cube is approximately 1×1 pixel). Additionally, a small amount of fog is applied in the fragment shader to make the depth even clearer, as it may not be as evident with single-colored faces.
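The per-fragment math can be sketched in plain JavaScript. This is a reference version of the idea, not the shader itself: the function names, the line width, the darkening factor and the choice of linear fog are all assumptions for illustration.

```javascript
// Darkens fragments that lie near the edge of a 1x1 cell, which draws a
// grid of squares across the face. (u, v) are coordinates in pixel units.
function gridFactor(u, v, lineWidth = 0.1) {
  const fu = u - Math.floor(u); // fractional position inside the cell
  const fv = v - Math.floor(v);
  return fu < lineWidth || fv < lineWidth ? 0.85 : 1.0;
}

// Linear fog: 0 at or before `near`, 1 at or beyond `far`.
function fogFactor(distance, near, far) {
  const t = (distance - near) / (far - near);
  return Math.min(1, Math.max(0, t));
}

// Combines both effects for one fragment. Colors are [r, g, b] in 0..1.
function shadeFragment(color, u, v, distance, fogColor, near, far) {
  const grid = gridFactor(u, v);
  const fog = fogFactor(distance, near, far);
  return color.map((c, i) => c * grid * (1 - fog) + fogColor[i] * fog);
}
```

In GLSL the same blend would typically use `fract`, `clamp` and `mix`.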

For the physics and collision detection, I set up a very simple check based on the heightmap data: it tests whether the camera is on the floor, and whether it is about to cross a boundary into an area with a higher heightmap level than where it currently resides.
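A check along those lines might look like the following sketch. The camera shape (`x`, `y` on the map, `z` as altitude) and the function names are assumptions for this example, and anything outside the map is treated as an infinitely high wall.

```javascript
// Looks up the heightmap level under a map position.
function heightAt(heights, x, y) {
  const row = heights[Math.floor(y)];
  const h = row ? row[Math.floor(x)] : undefined;
  return h === undefined ? Infinity : h; // off the map counts as a wall
}

// The camera is on the floor when it has not risen above the level below it.
function isOnFloor(heights, camera) {
  return camera.z <= heightAt(heights, camera.x, camera.y);
}

// Attempts a horizontal move; returns the camera unchanged when the
// target cell is higher than the camera's current altitude.
function step(heights, camera, dx, dy) {
  const nx = camera.x + dx;
  const ny = camera.y + dy;
  if (heightAt(heights, nx, ny) > camera.z) {
    return camera; // blocked by a higher level
  }
  return { ...camera, x: nx, y: ny };
}
```

Moving onto a lower level is allowed here; making the camera then fall to the new floor would be a separate gravity step.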

Considering what the library goes through to form a 3D representation of a webpage, I personally find it quite amazing how fast some of the browsers are able to process it. Keep in mind that the library could be evaluating thousands of nodes and tens of thousands of letters, processing lots of different CSS properties for each, which can result in up to a hundred thousand calls to the canvas drawing context. From there, it forms the geometry, which can easily end up consisting of over a million vertices and half a million triangles, dumps it onto the GPU, and a full 3D representation of the webpage pops up within a second or two.

Of course, the type of page and the amount of content have a big impact on performance, as do the type of computer and browser you use and how many images need to be pre-loaded prior to parsing the DOM. If you want to give 3D browsing a go, I’ve added it to my own homepage, and it’s accessible with the hashtag #3d.

If you want to try it on your own page, you can get the built script from here or view the sources at GitHub.

Please keep in mind the image cross-origin limitations. If you wish to try the script without hassle on any page, you can use it through this Chrome extension, which works around the cross-origin image issue.

If injecting unknown scripts into your browser’s console is your thing, here is a snippet ready for you:

```javascript
(function(d) {
  var s = d.createElement("script");
  s.src = "http://hertzen.com/js/domfps.min.js?v2";
  d.body.appendChild(s);
})(document);
```

Or you can execute it on this page by clicking here.