Are you planning on doing a quick prototype or demo in Python that utilizes geospatial plots, and want to use a library to do the heavy lifting? There are loads of different libraries to choose from, and it can be hard to know which one’s right for you.

I faced this problem a while ago, and ended up trying my demo with two different libraries: GeoPandas and Plotly. If you’d like to know what worked out, what didn’t, and which one you should choose, this is for you.

If you were building a full-on production system, the smart move would be to separate the computation from the graphics, for example by running the computation in Python and building the frontend with React. However, as I was just building a prototype by myself, this wasn’t feasible: I had neither the time to learn React myself, nor the resources to employ a frontend specialist to do it for me. So I had to use a language that I’m familiar with – Python.

Making a quick demo is often valuable. Whether it’s for internal prototyping, pre-sales, or refining analyses, a demo can enable quick iteration with stakeholders who are not data scientists themselves. If a picture is worth a thousand words, a demo must equate to a short novel. Personally, I find that people are much more interested in discussing things with images and playing with demo systems, rather than talking about abstract equations and ideas. If a data scientist can produce a demo on their own, it can help immensely in facilitating fast iteration with ML and AI models.

The prototype: using public data to model the similarity of neighborhoods

First, let me provide a quick example of what my prototype was meant to do. The use case I set out to solve was pretty simple: Let’s say you’re moving from one area in Finland to another. You like the area you currently live in, but don’t know much about the place you’re heading to. For example, you currently live in Kallio, the hipster-friendly neighborhood of Helsinki that features lots of cafés and young professionals, and you’re looking to move to Jyväskylä. Which neighborhood would you feel most at home in? Where is the Kallio of Jyväskylä? If you haven’t spent much time in Jyväskylä, you probably have no idea.

I wanted to find out how the system could automatically provide a shortlist of candidate neighborhoods which would be similar (with similarity computed by an AI model) to the current location of the user. And technically, how do I build a usable, interactive demo with relatively little effort?

Essentially, the demo system needed four parts:

- Data about neighborhoods and their attributes

- An algorithm that defines which neighborhoods are similar

- A plot engine to render the results in graphical form

- A user interface to query the user

For a demo, I could have done just the first three parts, and presented results as a few precomputed scenarios. However, testing how sensible the recommendations are turned out to be difficult. If the system recommends places I’ve never been to, how am I supposed to evaluate that recommendation? And since I have only lived in a few neighborhoods, my personal experience only allows for a limited number of test cases. This is why I decided to incorporate a UI into the demo which would help me gather more test data from my colleagues.

Getting data to base the model on was quite easy. Statistics Finland has a very good repository of public data, including a database called PAAVO, which contains vast amounts of data about the different postcode areas in Finland. Statistics Finland has gathered all kinds of information about demographics, types of houses, and the number and distribution of jobs and income – all on the level of individual postcodes. Perfect! I just had to clean up the data, and impute some missing information. From a data-wrangling perspective, this was all quite easy.
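As a rough illustration of the imputation step, here is a minimal sketch with pandas. The post doesn't say which imputation method was used, so median imputation is an assumption here, and the column names and values are made up rather than taken from PAAVO:

```python
import numpy as np
import pandas as pd

# Hypothetical slice of postcode-level data; the real PAAVO columns differ.
paavo = pd.DataFrame(
    {
        "postcode": ["00500", "00530", "40100", "40520"],
        "median_income": [24000.0, np.nan, 21000.0, 23000.0],
        "share_apartments": [0.95, 0.90, np.nan, 0.40],
    }
).set_index("postcode")

# Replace each missing value with the median of its own column.
imputed = paavo.fillna(paavo.median())
print(imputed)
```

Median imputation is crude, but for a demo it keeps every postcode area in the data set without letting a few gaps distort the picture too much.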

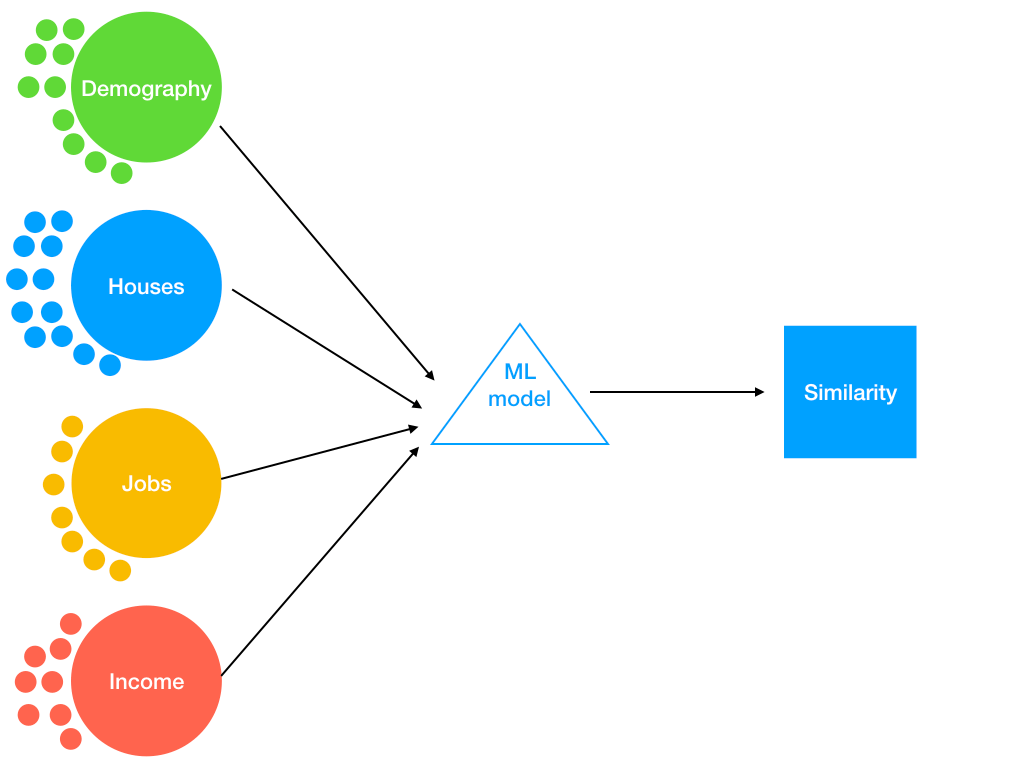

Image 1 displays a schematic of the system. After the clean-up, the PAAVO data included 37 variables that I ended up using. The data broadly represents four themes: demography, housing, jobs, and income statistics about all the postcode areas of Finland. These data can be fed into an AI model, which then uses an algorithm to determine how “similar” one postcode area is to another. Essentially, areas are similar to each other if they have demographically similar inhabitants; the same distribution of apartments, single-family houses and summer cottages; a similar number of jobs; and a similar level of income at the individual and family level. Notably, the database does not include any data about the number of businesses, parks, and so on. If I were creating a real service, I would probably try to gather such data from other sources, e.g. Google Maps. In this demo case, however, I thought that the included variables would provide reasonable proxies for many interesting excluded variables. For example, the number of jobs in the service industry is likely to be heavily correlated with the number of cafés in the area.

Image 1: A schematic of the system, with data from the PAAVO database being fed into a model that produces similarity metrics. The small circles represent the number of included variables for each topic.

Choosing an ML model that is both fast and intelligible

The next issue was the ML model. For the demo, I wanted something that is at least somewhat interpretable instead of a black box, and also fast to compute. There’s practically no limit to how much time you can spend on trying and developing fancy models, so I wanted to stick to something that’s simple enough to run. Explainability of the similarity measures was important, so that I could at least to some extent analyze why the system thinks two areas are similar. Like I said before, it’s already difficult enough to interpret the quality of the recommendations, even without bringing model complexity into the picture.

First, I tried linear discriminant analysis (LDA), but I didn’t manage to get satisfactory results. In the end, a classic principal component analysis (PCA) worked quite nicely. PCA is a non-domain-specific and theory-agnostic model, which just assumes that the data have been measured without error. This is in contrast to (confirmatory) factor analysis, which assumes that there are a certain number of latent variables behind the measured indicators, which themselves include measurement error. Factor analysis is suitable for cases like experimental research, when you have a prior understanding of the theoretical model that results in the gathered data. However, in this case, there is no theoretical reason to prefer, say, three factors over four, so PCA makes more sense.
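To make the idea concrete, here is a minimal sketch of a PCA-based similarity ranking with scikit-learn. The data is synthetic, and both the standardization step and the use of Euclidean distance in component space are my assumptions for illustration; the helper `most_similar` is hypothetical and not taken from the demo's source code:

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)

# Synthetic stand-in for the cleaned data: 200 areas x 37 variables.
X = rng.normal(size=(200, 37))

# Standardize first so that variables on large scales do not dominate.
X_std = StandardScaler().fit_transform(X)

# Project each area onto a handful of principal components.
pca = PCA(n_components=5)
scores = pca.fit_transform(X_std)  # shape (200, 5)


def most_similar(scores, query_idx, candidate_idx, top_n=3):
    """Rank candidate areas by Euclidean distance to the query area."""
    distances = np.linalg.norm(scores[candidate_idx] - scores[query_idx], axis=1)
    order = np.argsort(distances)[:top_n]
    return [candidate_idx[i] for i in order]


# Pretend area 0 is the user's home and areas 100-199 are in the target city.
candidates = list(range(100, 200))
print(most_similar(scores, query_idx=0, candidate_idx=candidates))
```

Because the ranking only needs one distance computation per candidate, it stays fast enough to run on every click in an interactive demo.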

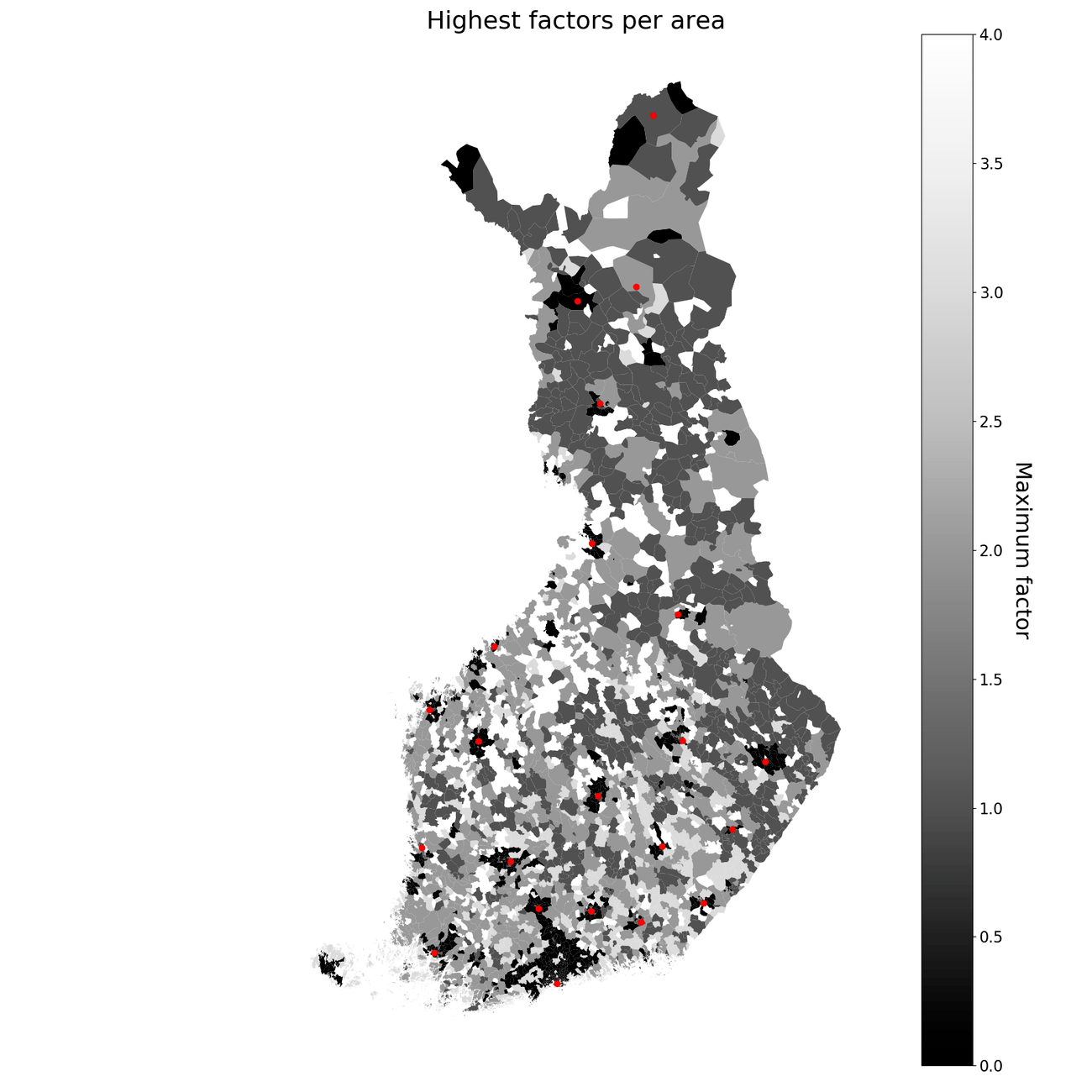

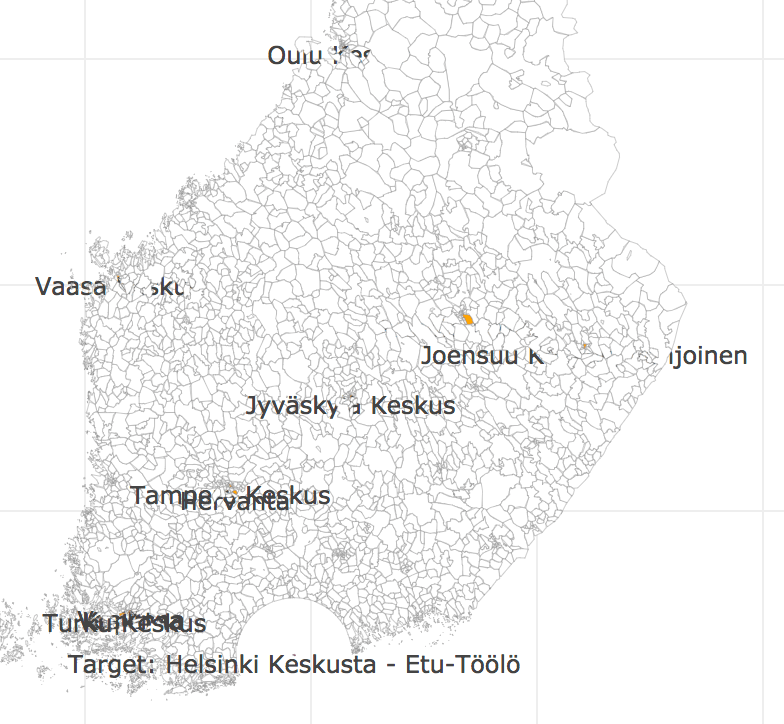

The final model was a PCA with five components, which managed to separate cities from the countryside without an issue. To interpret the meaning of the principal components, you can take a look at their loadings (i.e. which variables contribute to a component getting a high score). The components in this model aren’t super obvious, but there are some clearly emerging themes, like many apartments vs. other types of houses, large income vs. small income, families vs. no kids, and many young females (i.e. typically student areas). In the accompanying map, taken straight from the first version of the demo, each area is colored according to its highest-scoring principal component (so there are five colors, each representing one component). I’ve also marked most major cities on the map. Clearly, cities tend to share the same highest-scoring component, while rural areas have one of the other components. The figure is a good sanity check: If the model couldn’t separate cities from the countryside, the recommendations would have little chance of making any sense.

Highest-scoring principal component for each postcode area, showing that the separation of cities and countryside works. Major cities are marked with a red dot.
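The two steps described above, reading the loadings and labeling each area by its highest-scoring component, can be sketched roughly like this. The data and the variable names (`var_0` and so on) are synthetic placeholders, and the rows of scikit-learn's `components_` are used as a loose stand-in for loadings:

```python
import numpy as np
import pandas as pd
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(1)
variables = [f"var_{i}" for i in range(37)]  # hypothetical variable names
X = pd.DataFrame(rng.normal(size=(300, 37)), columns=variables)

pca = PCA(n_components=5)
scores = pca.fit_transform(StandardScaler().fit_transform(X))

# Component weights: one row per component, one column per original variable.
loadings = pd.DataFrame(
    pca.components_,
    columns=variables,
    index=[f"PC{i + 1}" for i in range(5)],
)

# The variables with the largest absolute weights hint at a component's theme.
top_for_pc1 = loadings.loc["PC1"].abs().sort_values(ascending=False).head(5)
print(top_for_pc1)

# Label each area with its highest-scoring component (0-4), usable as a color.
dominant = scores.argmax(axis=1)
print(np.bincount(dominant, minlength=5))
```

On real data, the `dominant` array would be attached to the area polygons as a categorical column and used to color the map.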

Drawing plots: Which tool should you choose?

When dealing with geographical data, results are often easier to comprehend through images – that is, maps. This means that the next step for my demo was to choose a plotting library. There are several libraries available, from really low-level polygon manipulation with Shapely and Matplotlib to more high-level libraries designed specifically for geospatial data. Because GeoPandas is built specifically for geospatial data, I chose to start with it, and used it in a Jupyter notebook to build the first iteration of the demo. Later, I switched over to Plotly to be able to define the plots together with interactive UI elements.

Plotting using GeoPandas

The map above was drawn using the geospatial library GeoPandas. Using it was easy: essentially, I combined the area polygons (available from Statistics Finland) and the PAAVO data about the areas into one GeoDataFrame. Then, I just called the plot method, and told it which variable to use for coloring the areas.
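A minimal sketch of what such a call can look like, using made-up squares in place of the real postcode polygons. The column names `postcode` and `median_income` are assumptions for illustration, not the names used in the PAAVO data:

```python
import matplotlib

matplotlib.use("Agg")  # render off-screen; drop this line in a notebook

import geopandas as gpd
import pandas as pd
from shapely.geometry import box

# Made-up square "postcode areas" standing in for the real polygons.
areas = gpd.GeoDataFrame(
    {"postcode": ["00500", "00530", "40100"]},
    geometry=[box(0, 0, 1, 1), box(1, 0, 2, 1), box(0, 1, 1, 2)],
)

# Hypothetical attribute table keyed by the same postcode.
stats = pd.DataFrame(
    {"postcode": ["00500", "00530", "40100"], "median_income": [24000, 22000, 21000]}
)

# Merging onto the GeoDataFrame keeps the geometry column intact.
gdf = areas.merge(stats, on="postcode")

# One call: color each polygon by the chosen column.
ax = gdf.plot(column="median_income", legend=True)
ax.set_axis_off()
```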

When it comes to plotting, it really doesn’t get much easier than this. Plots in tutorials often involve lines and lines of Matplotlib code to make the end result look good. GeoPandas knows to look for the ‘geometry’ column inside your GeoDataFrame, and uses that to plot the polygons.

For a while, the demo I showed to colleagues was this Jupyter notebook, with variables defined in a code block acting as the input arguments of the model. While this works technically, it’s not very efficient for gathering user feedback. For each iteration, I would have to either personally type the name or postcode of the area they currently live in, or have the other person write it in the code. Sometimes, a test user would make a typo in defining one of the arguments, and the model wouldn’t run. I found myself wishing for an interface with a list and some buttons that the users could operate. Next, I set out to do just that.

I first tried to do this with ipywidgets, and technically it worked fine. Working inside a Jupyter notebook, one cell would create the dropdown lists, and selecting a value from one of them would run the model and render the resulting plot in the same notebook. However, I couldn’t draw the controls and the plot side by side, so the plot didn’t fit on the same screen as the code and dropdown lists, and the user had to scroll back and forth if they wanted to run the model again. In that sense, this turned out to be even worse from a usability perspective than just using code blocks.

Plotting using Plotly

Feeling frustrated with the UI, I thought that there must be something in Python that would be similar to RStudio’s Shiny. I stumbled upon Plotly’s Dash, which promised easy drawing of interactive plots with lists and buttons. Perfect! Or so it seemed.

It turned out that I couldn’t just use the plotting functions I had defined to use with GeoPandas. No, Plotly has its own. Even worse, it has its own schema for the data representation. I was able to translate the data into the new schema, but to be honest it took quite a while. This was also the first time I had switched to Plotly in the middle of a project, which made the problem considerably worse. It just goes to show that if you have to switch technologies in the middle of a project, you’ll likely encounter difficulties that you haven’t faced before.

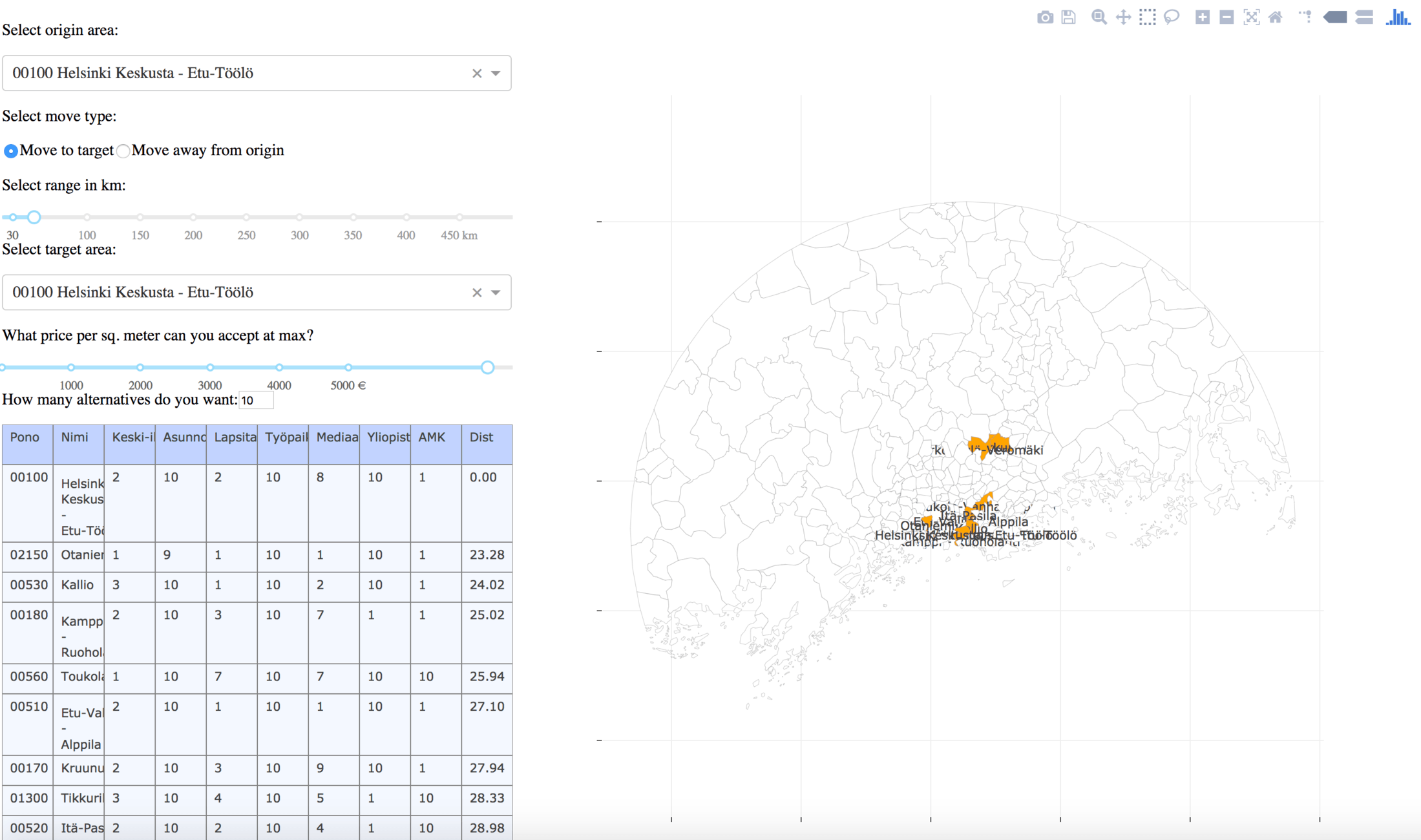

Once I had the data in the right format, it was pretty smooth sailing to define the UI elements on one side of the screen, and the plot on the other side. Then I just connected the UI elements to a function that would update the graph. I also added a table that helped debug the system, as I could check that the model was fetching the correct pieces of data from the system in the correct order.

Screenshot of the demo UI done with Plotly.

GeoPandas vs. Plotly: who wins?

So – GeoPandas vs. Plotly, which is better? The answer of course is “it depends”. Both did some things well and were weaker in other areas. This means that you should pick your library depending on what you’re trying to build.

For the purposes of the demo, Plotly was really nice: I could define both the UI and the plot purely in Python, without having to learn another language. Styling could have been done using CSS, which I didn’t do – and it obviously shows in the end result. The demo worked more or less how I wanted; however, there were two major issues.

First, rendering the polygons was much slower in Plotly than in GeoPandas. Drawing all 3,000 postcode areas of Finland took only 5 seconds with GeoPandas, but over 8 seconds with Plotly. I wasn’t able to figure out where the difference came from, or to close the gap. Obviously, a real system in production would use React or similar for the drawing, but the difference is still worth mentioning.

Second, there were some quality issues with polygons and annotations in Plotly. Whereas GeoPandas worked pretty much straight out of the box with a few lines of code, I was never quite satisfied with how Plotly drew some items. For example, the annotations kept randomly disappearing, despite my repeated efforts to ensure that they would appear on top of the polygons. I would have also liked to draw the polygons in one layer, and then just the fill colors and annotations on top, but I could never get this to work with Plotly.

Labels of areas kept disappearing in Plotly.

So which one would I pick for my next project? If you desperately want interaction with the user, I think Plotly is a sound choice. Creating the UI elements with it was very easy and convenient. However, if your data has a lot of polygons that need to be drawn (and you can’t use Plotly with Mapbox), I would stick to GeoPandas. It’s wonderful to use for wrangling geospatial data, and the plots looked surprisingly good considering the very little effort I put into them.

If you’d like to know more about how the model was made, you can check out the source code on GitHub. Naturally, you’re also welcome to get in touch with any ideas or recommendations regarding this demo, or the application of geospatial plotting in Python.